What Is a Website Crawler?

A website crawler is a computer program that browses the World Wide Web in a methodical, automated manner. This process is called web crawling or spidering.

A web crawler is an internet bot that systematically browses the World Wide Web, typically for the purpose of web indexing. Tianna Haas notes that "web crawlers go by many names, including spiders, robots, and bots," and that these descriptive names sum up what they do: they crawl across the World Wide Web to index pages for search engines.

AIDM answered the question "What is crawling in SEO?" in a tweet, stating that when a search engine's spider or crawler searches your website on the internet, the process is called crawling.

Ranksoldier added in a tweet that search engine crawlers are like robots that read the pages of a website to determine whether they are authoritative enough to rank on the SERP. They read the content and elements present on a website and check their relevance to one another.

Web search engines and some other websites use web crawling software to update their own web content or their indices of other sites' web content. Web crawlers copy pages for processing by a search engine, which indexes the downloaded pages so that users can search more efficiently.

How Website Crawlers Work

Once you know what a website crawler is, the next question is how crawlers work.

Rand Fishkin, CEO and founder of SEOmoz, states that "Crawling is the discovery process in which search engines send out a team of robots (known as crawlers or spiders) to find new and updated content." Crawlers consume resources on the systems they visit and often visit sites without approval. Issues of schedule, load, and "politeness" come into play when large collections of pages are accessed. The number of internet pages is extremely large, and even the largest crawlers fall short of making a complete index. For this reason, search engines struggled to give relevant search results in the early years of the World Wide Web, but today relevant results are given almost instantly.

Crawlers can validate hyperlinks and HTML code. They can also be used for web scraping in data-driven programming.

Common Website Crawlers

There are hundreds of web crawlers and bots scouring the internet, but below is a list of 10 common web crawlers:

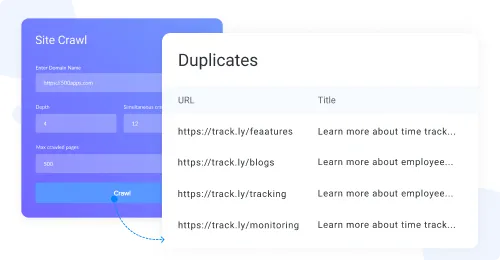

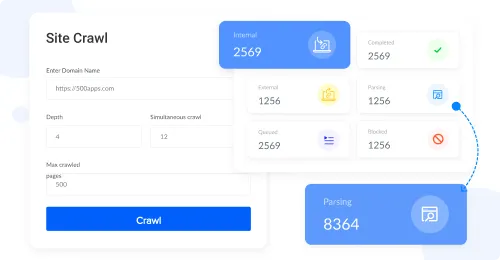

NinjaSEO

NinjaSEO is one of the most common website crawlers. It can perform an in-depth crawl of websites to find errors and opportunities, grade pages according to their SEO scores, and save crawl reports so that you can later compare them side by side with future reports to analyze how well your SEO strategy is being fulfilled. The features and offerings of NinjaSEO help answer the "what is a website crawler" question, since they illustrate what a website crawler does.

Are you looking for better ways of attracting prospective customers to your website? NinjaSEO is a strong option. It brings you a wide range of customers, thus leading to an increase in sales. We have a team of experts that works extensively on the technical aspects of your website, using keywords, competitors, and content to provide you with a good customer strategy for your business. If you want to bring more traffic to your website, NinjaSEO can help you achieve that. We also help your website rank well.

Bingbot

Bingbot is another website crawler. Although it uses its own method, it performs the basic functions of a website crawler, i.e., discover, crawl, extract, and index. It is one of the most common website crawlers.

Bingbot does not only crawl the web. It can also be used to verify whether your website is in Bing Webmaster Tools. What is Bing Webmaster Tools? Bing Webmaster Tools (Bing WMT) is a free Microsoft service that allows webmasters to add their sites to the Bing crawler so that they show up in the search engine.

It also helps to monitor and maintain a site's presence. Bing Webmaster Tools is to the Bing search engine what Google Search Console is to Google. It lets you observe the well-being of your website and gives you a view of how your customers are discovering it. Bing Webmaster Tools is very easy to set up.

Bingbot crawls for Bing, one of the largest search engines, and that is one good reason to make sure it can reach your website if you want to rank well there. Check out Bing's bot.

Slurp Bot

Slurp is the Yahoo Search robot for crawling and indexing web page information. So, do you know what Slurp Bot does? It gathers content from partner sites for inclusion within sites like Yahoo Sports, Yahoo Finance, and Yahoo News.

It also accesses pages from sites across the web to confirm accuracy and improve Yahoo's personalized content for its users. Another useful feature of Slurp Bot is that it obeys the Robots Exclusion Standard, so you can stop Slurp from reading some portions of your site. All you need to do is create a robots.txt file in the root directory (home folder) of your site and add a rule for "User-agent: Slurp".
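To make the robots.txt rule concrete, here is a minimal sketch that uses Python's standard `urllib.robotparser` module to check what such a file would allow. The robots.txt content, the `/private/` path, the `example.com` URLs, and the `can_fetch` helper are illustrative assumptions, not rules published by Yahoo.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep Slurp out of /private/, allow everything else.
ROBOTS_TXT = """\
User-agent: Slurp
Disallow: /private/

User-agent: *
Disallow:
"""


def can_fetch(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # Slurp is blocked from the private area but not from the rest of the site.
    print(can_fetch("Slurp", "https://example.com/private/report.html"))
    print(can_fetch("Slurp", "https://example.com/index.html"))
    # Other crawlers fall under the catch-all rule, which blocks nothing.
    print(can_fetch("Bingbot", "https://example.com/private/report.html"))
```

A well-behaved crawler runs a check of this kind before requesting a page. The file only states your wishes, so it relies on the crawler choosing to honor it.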

You should really consider using Slurp Bot because it has a high standard that would suit your website perfectly.

Baiduspider

Baiduspider is the robot of Baidu, one of the most important search engines in China. So, if China is one of your target markets, being crawled by Baiduspider can help you reach it.

This is how Baiduspider works: it checks the content of your website to collect information that will be used to index your pages in the search engine database.

Each time Baiduspider visits your pages, it looks for specific information such as keywords, the structure of your pages, quality of content, content updates, and others. The crawling process is divided into two steps:

- the spider crawls the page and puts it in storage, and

- it creates a list of links on your page to be checked later.
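The two steps above can be sketched in a few lines of Python. This is a generic illustration of the store-then-queue pattern, not Baidu's actual implementation. The names `LinkCollector`, `process_page`, `storage`, and `frontier` are hypothetical, and the page is passed in as a string so the example runs without network access.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag seen while parsing."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.hrefs.append(value)


def process_page(url, html, storage, frontier):
    """Step 1: store the page. Step 2: queue its links for a later visit."""
    storage[url] = html
    collector = LinkCollector()
    collector.feed(html)
    for href in collector.hrefs:
        absolute = urljoin(url, href)  # resolve relative links against the page URL
        if absolute not in storage and absolute not in frontier:
            frontier.append(absolute)


if __name__ == "__main__":
    storage, frontier = {}, deque()
    page = '<a href="/about">About</a> <a href="https://example.com/blog">Blog</a>'
    process_page("https://example.com/", page, storage, frontier)
    print(list(frontier))
    # prints ['https://example.com/about', 'https://example.com/blog']
```

A real crawler would repeat this in a loop, taking the next URL from the front of `frontier`, downloading it, and calling `process_page` again.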

With the data collected, Baidu will rank your content. On Baidu, recent or fresh content ranks better than long and detailed articles. Baiduspider allows you to block pages you don't want it to monitor. Our experienced team can guide you if you run into any difficulty.

Yandex Bot

Yandex Bot is the crawler of the Yandex search engine. Yandex ranked as the fifth largest search engine worldwide, with more than 150 million searches per day as of April 2012 and more than 25.5 million visitors. It is also the largest search engine in Russia.

Yandex Bot now crawls CSS and JavaScript content in order to better understand the content of web pages. Yandex also has a mobile-friendly label that lets webmasters make sure their pages meet mobile-friendly guidelines. This is a good web crawler to pay attention to if you are looking for ways to make your content rank well on the web.

Sogou Spider

Sogou Spider is the crawler of Sogou, a Chinese search engine that was launched on August 4, 2004. With a rank of 121 in Alexa's Internet rankings, Sogou provides an index of up to 10 billion web pages, which shows how useful Sogou Spider can be to your website. You should also consider this web crawler if you have a keen interest in the Chinese market.

It is highly recommended if, as a business owner, you want to attract a large number of customers and increase sales.

Exabot

Exabot is a popular French web crawler. It belongs to Exalead, a search engine founded in 2000 that is now part of Dassault Systèmes. This search engine pioneer is behind various mega-budget technology projects.

"Exabot" is the User-Agent of Exalead's robot. Exalead gives you accurate and useful information from the internet. The robot's basic role is to collect and index data from all around the world to supply Exalead's search engine. The Exabot agent crawls your site so that its content may be included in Exalead's main index, and hence in its search results pages. Exabot fully complies with the robots.txt and robots meta tag standards. Exalead search results are organized in order of importance for each user query, so the position of a site will change according to the search terms entered.

Do you need accurate information from the internet? Exabot can help you get it quickly.

Facebook External Hit

Facebook External Hit is a feed fetcher that Facebook uses to retrieve details associated with shared links, such as thumbnail images or video tags. It crawls the links that users provide so that Facebook can show a preview of the shared content.

Facebook allows its users to send links to interesting web content to other Facebook users. Part of how this works on the Facebook system involves the temporary display of certain images or details related to the web content, such as the title of the webpage or the embed tag of a video. The Facebook system retrieves this information only after a user provides a link.

The Facebook Crawler crawls the HTML of an app or website that was shared on Facebook, either by copying and pasting the link or through a Facebook social plugin. The crawler gathers, caches, and displays information about the app or website, such as its title, description, and thumbnail image. Are you a business owner who is enthusiastic about Facebook? You can use Facebook External Hit to boost your business and watch the results it yields.
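As a rough illustration of how a link preview might be assembled, the sketch below pulls the title, description, and thumbnail from Open Graph meta tags (`og:title`, `og:description`, `og:image`), which are the tags Facebook's sharing documentation describes for this purpose. The `OpenGraphParser` class, the `extract_preview` helper, and the sample HTML are hypothetical. Facebook's real crawler is not public, so treat this as an assumption-laden sketch rather than its actual logic.

```python
from html.parser import HTMLParser


class OpenGraphParser(HTMLParser):
    """Collects <meta property="og:..." content="..."> pairs from a page."""

    def __init__(self):
        super().__init__()
        self.properties = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = dict(attrs)
        prop = attr_map.get("property") or ""
        content = attr_map.get("content")
        if prop.startswith("og:") and content is not None:
            # Keep the first value seen for each property.
            self.properties.setdefault(prop, content)


def extract_preview(html):
    """Return the fields a link preview would typically display."""
    parser = OpenGraphParser()
    parser.feed(html)
    return {
        "title": parser.properties.get("og:title"),
        "description": parser.properties.get("og:description"),
        "image": parser.properties.get("og:image"),
    }


if __name__ == "__main__":
    sample = (
        '<head>'
        '<meta property="og:title" content="What Is a Website Crawler?" />'
        '<meta property="og:description" content="A short guide to crawlers." />'
        '<meta property="og:image" content="https://example.com/thumb.png" />'
        '</head>'
    )
    print(extract_preview(sample))
```

Fields that a page does not declare come back as `None`, which is one reason to set these tags explicitly if you care about how your links look when shared.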

Alexa Crawler

Alexa also has a website crawler for locating and indexing different websites. Amazonbot is Amazon's web crawler, used to improve its services, such as enabling Alexa to answer even more questions for customers. Amazonbot is a polite crawler that respects standard robots.txt rules and robots meta tags.

Robots.txt is a file that website administrators can place at the top level of a site to direct the behavior of web crawling robots. All of the major web crawlers, such as those of Google, Yahoo, Bing, and Baidu, respect robots.txt. A crawler bot is also employed by Alexa, a subsidiary of Amazon that provides data and analytics on website traffic.

To identify Amazonbot in the user-agent string, look for "Amazonbot" along with additional agent information. Amazonbot supports the robots nofollow directive in meta tags in HTML pages. Once you have included this directive, Amazonbot won't follow any links on the page.
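The two checks described above can be sketched as follows: spotting "Amazonbot" in a user-agent string, and reading a robots meta tag for the nofollow directive. The helper names and the sample user-agent string are hypothetical, since Amazon's exact user-agent format is not given here.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() == "robots":
            for directive in attr_map.get("content", "").split(","):
                directive = directive.strip().lower()
                if directive:
                    self.directives.add(directive)


def is_amazonbot(user_agent):
    """True if the user-agent string mentions Amazonbot (case-insensitive)."""
    return "amazonbot" in user_agent.lower()


def may_follow_links(html):
    """False if the page carries a robots meta tag with the nofollow directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "nofollow" not in parser.directives


if __name__ == "__main__":
    # Hypothetical user-agent string, for illustration only.
    print(is_amazonbot("Mozilla/5.0 (compatible; Amazonbot/0.1)"))
    print(may_follow_links('<meta name="robots" content="nofollow">'))
    print(may_follow_links("<p>No robots meta tag here.</p>"))
```

The first helper is the kind of test you might run against server logs, while the second mirrors the decision a compliant crawler makes before following links on a page.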

Alexa also uses the Common Crawl to discover backlinks, and it uses the Alexa web crawler to identify issues with your site's SEO as part of its Site Audit service. The Alexa web crawler will not index anything you would like to remain private.

The Alexa web crawler (robot) identifies itself as "ia_archiver" in the HTTP "User-agent" header field. To prevent ia_archiver from visiting any part of your site, your robots.txt file should contain the line User-agent: ia_archiver followed by the line Disallow: /.

You Might Want to Know More About Amazon Alexa

Alexa is capable of voice interaction, music playback, making to-do lists, setting alarms, streaming podcasts, playing audiobooks, and providing weather, traffic, sports, and other real-time information, such as news.

Alexa can also control several smart devices, acting as a home automation system. Users can extend Alexa's capabilities by installing "skills", which are additional functionality developed by third-party vendors (in other settings more commonly called apps), such as weather programs and audio features.

Alexa's ability to answer questions is partly powered by the Wolfram Language. When questions are asked, Alexa converts sound waves into text, which allows it to gather information from various sources.

You can use all these features of Alexa to your advantage and drive traffic to your website. If you're having difficulty handling customer complaints, the Alexa crawler can help simplify things for you by politely proffering solutions to your customers' problems.

Conclusion

Crawlers can be used to gather specific types of information from webpages, such as harvesting e-mail addresses. When a search engine's spider or crawler searches your website on the internet, that process is called crawling.

Bots and botnets are usually associated with cybercriminals stealing data, identities, credit card numbers, and more. But that doesn't define what a website crawler is, because bots can also serve good purposes. Welcoming good bots can help protect your company's website and ensure that your site gets the internet traffic it deserves.

Most good bots are important crawlers sent out from the world’s biggest web sites to index content for their search engines and social media platforms. Allowing these bots to visit you can bring you good business.

If you gained value from this article, please share it with your friends. Are there other good bots you would like to share with us? Feel free to do that using the comment section.